Valeo.ai

Since 2017, our artificial intelligence research center has been at the forefront of AI research in the automotive industry, especially in assisted and autonomous driving. Twelve years ago there was no real AI in cars. Today, most new cars ship with extensive software, much of it AI-based.

Connected to academic partners worldwide, our Artificial Intelligence Research Center is committed to cutting-edge automotive applications. Leveraging state-of-the-art AI, we pioneer advances that redefine the future of the automotive industry.

Scientific research

Valeo.ai tackles the key challenges that autonomous vehicles encounter in everyday driving. Advanced Driver Assistance Systems (ADAS) sometimes fall short in accuracy and reliability, particularly in complex scenarios such as malfunctioning traffic lights, missing lane markings, adverse weather conditions, or other road users behaving abnormally.

Our mission is to overcome those obstacles and enhance automated driving, enabling safer and more efficient autonomous travel in any environment, worldwide.

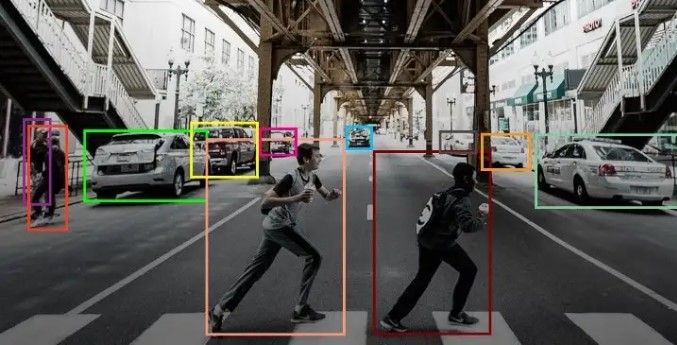

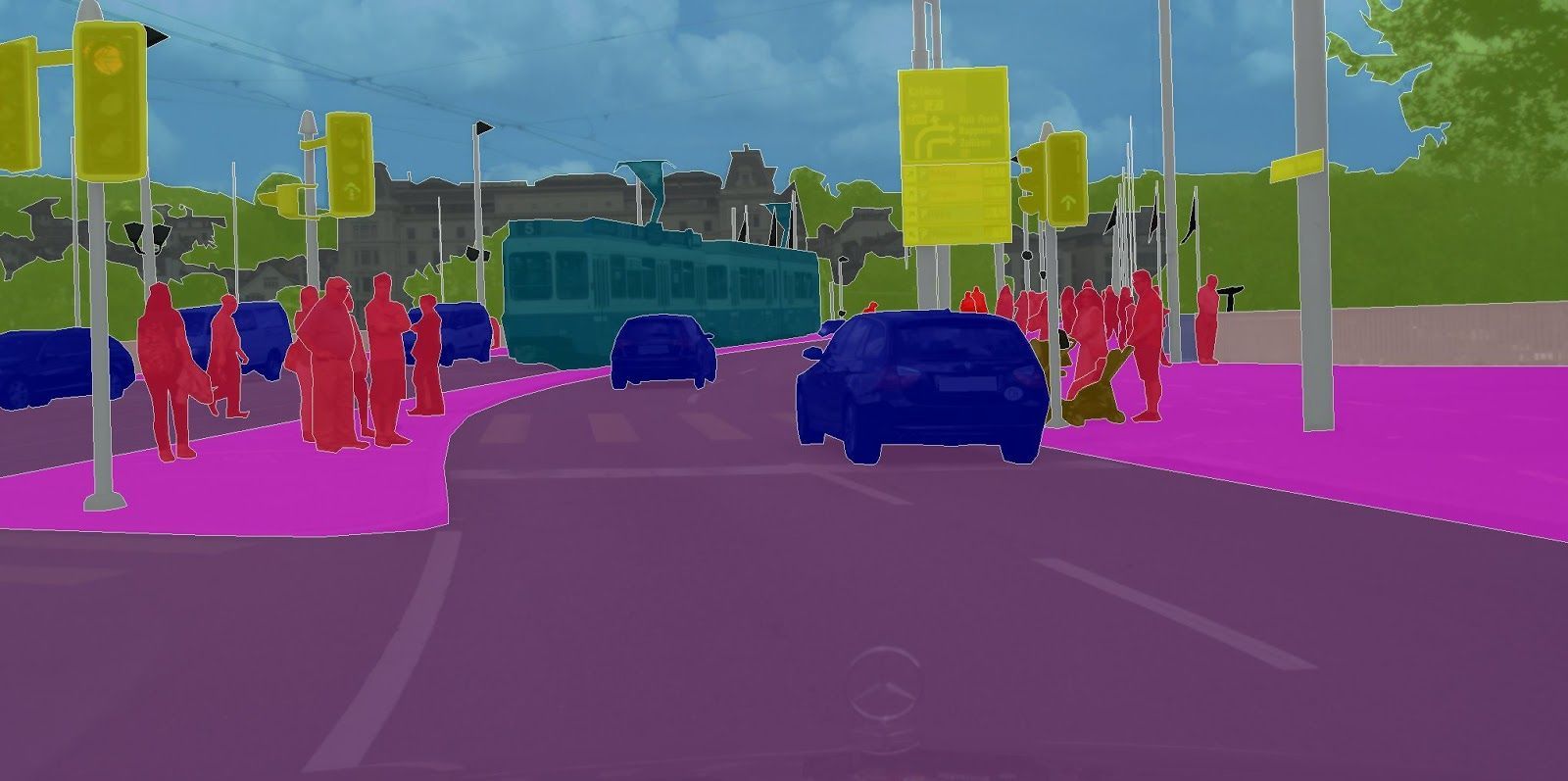

Scene understanding from multiple sensors

Autonomous vehicles are equipped with various sensors, including cameras, LiDARs (Light Detection and Ranging), radars, ultrasonic sensors, and inertial measurement units, which together provide complementary views of the environment.

Data from these sensors are fused to build a map of the surroundings, which the vehicle relies on to perceive and understand its environment.

Data- and annotation-efficient learning

Collecting and annotating large datasets is costly and time-consuming. Our researchers explore alternatives to traditional fully supervised learning, such as weakly supervised and unsupervised approaches, to reduce annotation costs.

Our research in open-world perception also aims to build models that detect and adapt to novel objects and situations while operating safely and consistently in dynamic, real-world environments.

Dependable models

Autonomous vehicles are safety-critical systems that must be designed with the utmost care to be deployed safely and robustly.

Self-driving vehicles must operate confidently in contexts that are new or unexpected compared with their training scenarios, a goal that domain generalization helps achieve. This involves building systems that transfer what they have learned to new environments and deliver reliable results in practice.

Our research also covers methods that explain the decisions made by these complex systems, with the ultimate goal of making their behavior transparent in both normal and abnormal scenarios. We aim to improve trust in these systems by, for example, anticipating, explaining, and eliminating biases that could lead to incidents.

Team presentation

The valeo.ai center spearheads AI research and its applications in the automotive industry. Our teams include experts in generative AI and multimodal understanding, computer vision and scene interpretation, core machine learning, and predictive and uncertainty modeling.

Meet our team

- R&I Technical Engineer Florent Bartoccioni
- Research Scientist Victor Besnier
- Research Scientist Alexandre Boulch
- Senior Research Scientist Andrei Bursuc
- PhD Student Amaia Cardiel
- PhD Student Loick Chambon
- Research Scientist Mickaël Chen
- Scientific Director Matthieu Cord
- Research Scientist Spyros Gidaris
- Research Scientist David Hurych
- PhD Student Victor Letzelter
- Principal Scientist Renaud Marlet
- PhD Student Tetiana Martyniuk
- PhD Student Björn Michele
- Project Manager Serkan Odabas


- Research Scientist Gilles Puy
- Research Scientist Nermin Samet
- PhD Student Corentin Sautier
- PhD Student Sophia Sirko-Galouchenko
- Senior Scientist Eduardo Valle
- Research Scientist Tuan-Hung Vu
- Research Scientist Yihong Xu
- Research Scientist Eloi Zablocki

Collaborative projects

Confiance.ai

Confiance.ai is a technological research program aimed at securing, certifying, and enhancing the reliability of artificial intelligence (AI) systems. The program, launched by the Innovation Council, focuses on developing methods and tools that help industrial players engineer and deploy AI-based systems. Aiming to remove the barriers to industrializing AI, Confiance.ai addresses the scientific challenges of trustworthy AI and provides tangible solutions for real-world deployment. The program advances progressively, starting with data-based AI solutions and gradually moving to more complex problems and industrial use cases.

MultiTrans

The MultiTrans project aims to accelerate the development and deployment of autonomous vehicles (AVs) by addressing the challenges of perception, decision, and control in open environments. The project focuses on vision-based embedded systems and proposes a novel approach to transfer learning and domain adaptation, enabling AVs to operate safely and reliably in a wider range of situations. Expected benefits include advances in transfer and frugal learning and in multi-domain, multi-source computer vision, as well as a robotic autonomous vehicle model demonstrator combined with a virtual world model.

Joint lab with Inria

Within our joint lab with Inria, we study 2D vision and 3D perception for robust scene understanding. Our research focuses on reducing the need for abundant data and supervision, moving toward weakly supervised and unsupervised vision algorithms, while making models more interpretable. We primarily address autonomous driving, but our research extends to a variety of indoor and outdoor applications.

ELSA

The ELSA project aims to establish a virtual center of excellence on safe and secure AI technology to address fundamental challenges hindering the deployment of AI. The project will develop a strategic research agenda focusing on technical robustness, privacy, and human agency, and will tackle three grand challenges: robustness guarantees, private collaborative learning, and human-in-the-loop decision making. The initiative builds on the ELLIS network of excellence and will connect over 100 organizations and 337 fellows and scholars to drive the development and deployment of AI technology that promotes European values.

EXA4MIND

The EXA4MIND project aims to democratize access to, and connectivity across, EU supercomputing centers, enabling innovative solutions to complex everyday problems and addressing challenges in data analytics, machine learning, and artificial intelligence at scale. The project will build an extreme data platform that combines large-scale data storage systems with powerful computing infrastructures, enabling integration with diverse data sources and supporting advanced data analysis pipelines for knowledge extraction.

ELLIOT

Leveraging Europe’s AI talent and supercomputing infrastructure, the EU-funded ELLIOT project will develop a family of open, trustworthy multimodal generalist foundation models: AI systems designed to learn general knowledge and patterns from massive amounts of data of various types (from videos, images, and text to sensor signals, industrial time series, and satellite feeds) and to efficiently transfer that knowledge to different downstream tasks.